Minimization of scalar function of one or more variables.

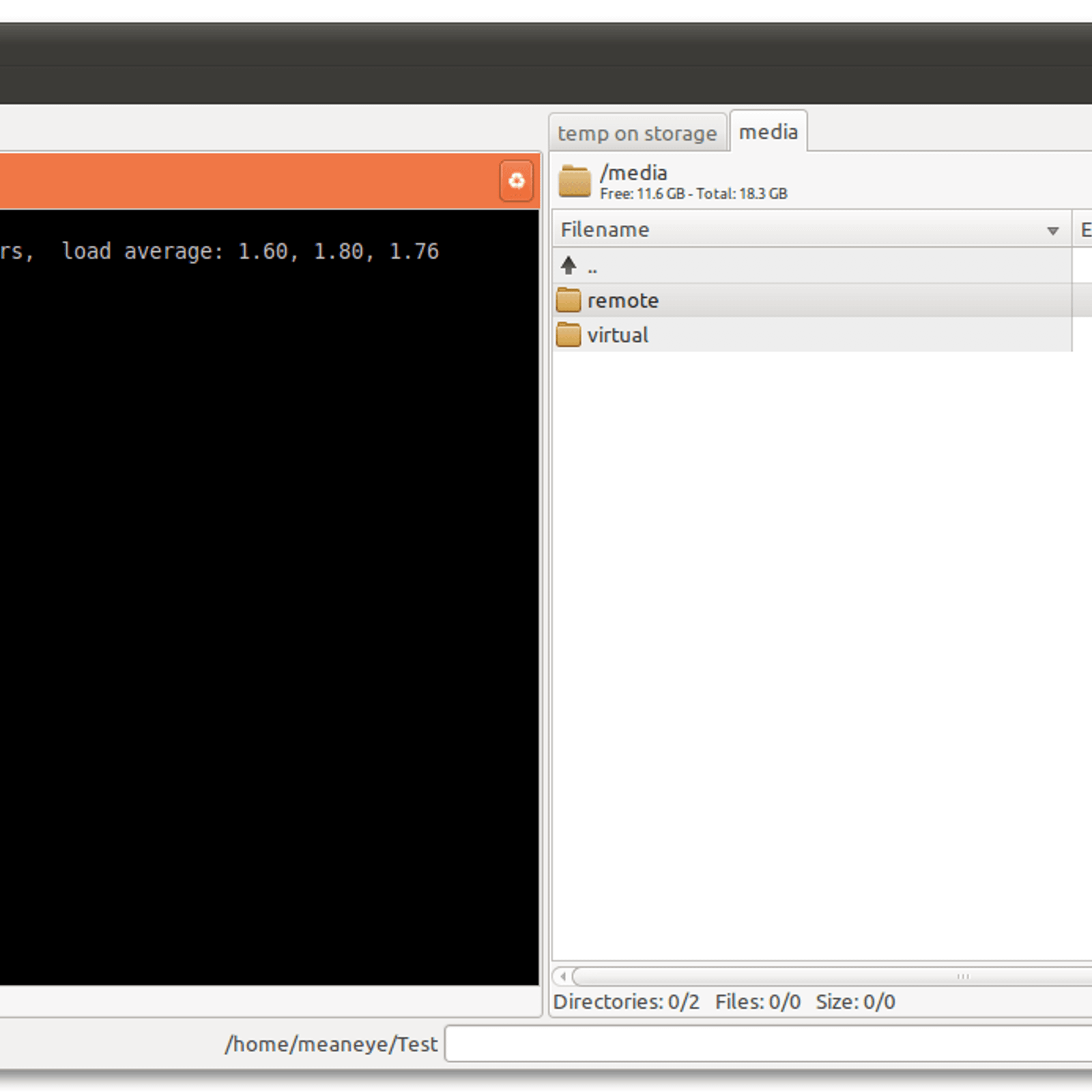

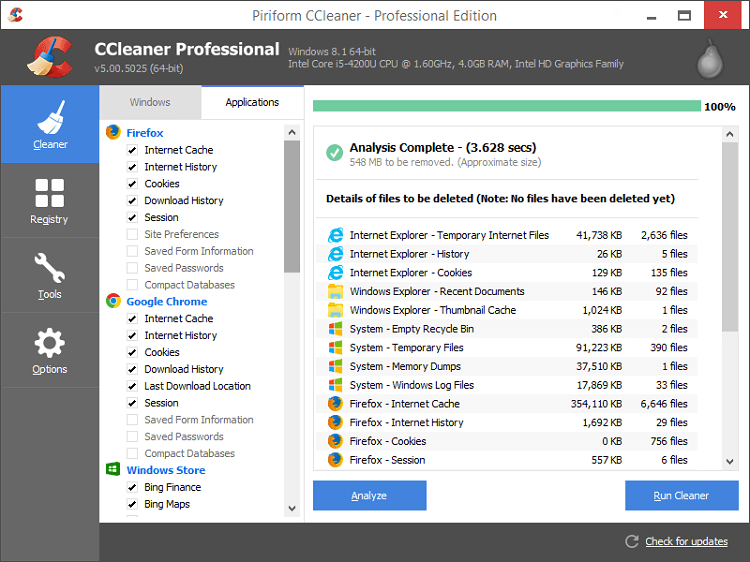


fun: The objective function to be minimized:

fun(x, *args) -> float

where x is a 1-D array with shape (n,) and args is a tuple of the fixed parameters needed to completely specify the function.

x0: Initial guess. Array of real elements of size (n,), where ‘n’ is the number of independent variables.

args: Extra arguments passed to the objective function and its derivatives (fun, jac and hess functions).
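As a minimal sketch of how fun, x0 and args fit together, the snippet below minimizes a hypothetical objective (shifted_quadratic, with illustrative parameters center and scale that are not part of SciPy) and lets the solver be chosen automatically.

```python
import numpy as np
from scipy.optimize import minimize


def shifted_quadratic(x, center, scale):
    """Hypothetical objective: scale * ||x - center||^2."""
    x = np.asarray(x)
    return scale * np.sum((x - center) ** 2)


center = np.array([1.0, -2.0, 0.5])   # fixed parameter, passed through args
x0 = np.zeros(3)                      # initial guess with shape (n,)

# args is forwarded to the objective as fun(x, *args).
res = minimize(shifted_quadratic, x0, args=(center, 3.0))
print(res.x)
```

The minimizer should land close to center, since that is where the quadratic vanishes.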

method: Type of solver. Should be one of

- ‘Nelder-Mead’

- ‘Powell’

- ‘CG’

- ‘BFGS’

- ‘Newton-CG’

- ‘L-BFGS-B’

- ‘TNC’

- ‘COBYLA’

- ‘SLSQP’

- ‘trust-constr’

- ‘dogleg’

- ‘trust-ncg’

- ‘trust-exact’

- ‘trust-krylov’

- custom - a callable object (added in version 0.14.0), see below for description.

If not given, the solver is chosen to be one of BFGS, L-BFGS-B or SLSQP, depending on whether the problem has constraints or bounds.

jac: Method for computing the gradient vector. Only for CG, BFGS, Newton-CG, L-BFGS-B, TNC, SLSQP, dogleg, trust-ncg, trust-krylov, trust-exact and trust-constr. If it is a callable, it should be a function that returns the gradient vector:

jac(x, *args) -> array_like, shape (n,)

where x is an array with shape (n,) and args is a tuple with the fixed parameters. If jac is a Boolean and is True, fun is assumed to return the objective and gradient as an (f, g) tuple. Methods ‘Newton-CG’, ‘trust-ncg’, ‘dogleg’, ‘trust-exact’, and ‘trust-krylov’ require that either a callable be supplied, or that fun return the objective and gradient. If None or False, the gradient will be estimated using 2-point finite difference estimation with an absolute step size. Alternatively, the keywords {‘2-point’, ‘3-point’, ‘cs’} can be used to select a finite difference scheme for numerical estimation of the gradient with a relative step size. These finite difference schemes obey any specified bounds.

hess: Method for computing the Hessian matrix. Only for Newton-CG, dogleg, trust-ncg, trust-krylov, trust-exact and trust-constr. If it is callable, it should return the Hessian matrix:

hess(x, *args) -> {LinearOperator, spmatrix, array}, (n, n)

where x is a (n,) ndarray and args is a tuple with the fixed parameters. LinearOperator and sparse matrix returns are allowed only for the ‘trust-constr’ method. Alternatively, the keywords {‘2-point’, ‘3-point’, ‘cs’} select a finite difference scheme for numerical estimation. Or, objects implementing the HessianUpdateStrategy interface can be used to approximate the Hessian. Available quasi-Newton methods implementing this interface are:

- BFGS

- SR1

Whenever the gradient is estimated via finite differences, the Hessian cannot be estimated with options {‘2-point’, ‘3-point’, ‘cs’} and needs to be estimated using one of the quasi-Newton strategies. Finite-difference options {‘2-point’, ‘3-point’, ‘cs’} and HessianUpdateStrategy are available only for the ‘trust-constr’ method.

hessp: Hessian of objective function times an arbitrary vector p. Only for Newton-CG, trust-ncg, trust-krylov, trust-constr. Only one of hessp or hess needs to be given. If hess is provided, then hessp will be ignored. hessp must compute the Hessian times an arbitrary vector:

hessp(x, p, *args) -> ndarray shape (n,)

where x is a (n,) ndarray, p is an arbitrary vector with dimension (n,) and args is a tuple with the fixed parameters.
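The different ways of supplying derivatives can be sketched as follows. The example uses the Rosenbrock helpers that ship with scipy.optimize (rosen, rosen_der, rosen_hess, rosen_hess_prod); the wrapper rosen_with_grad is a hypothetical name introduced only for illustration.

```python
import numpy as np
from scipy.optimize import (minimize, rosen, rosen_der,
                            rosen_hess, rosen_hess_prod)

x0 = np.array([1.3, 0.7, 0.8, 1.9, 1.2])


def rosen_with_grad(x):
    """Hypothetical wrapper returning the (f, g) tuple expected with jac=True."""
    return rosen(x), rosen_der(x)


# jac=True: fun itself returns the objective and the gradient.
res_fg = minimize(rosen_with_grad, x0, method='BFGS', jac=True)

# Callable gradient plus a callable returning the full (n, n) Hessian.
res_hess = minimize(rosen, x0, method='Newton-CG',
                    jac=rosen_der, hess=rosen_hess)

# Callable gradient plus a Hessian-vector product hessp(x, p).
res_hessp = minimize(rosen, x0, method='Newton-CG',
                     jac=rosen_der, hessp=rosen_hess_prod)

for res in (res_fg, res_hess, res_hessp):
    print(res.x)
```

Supplying hessp instead of hess avoids forming the full matrix, which tends to matter mostly when n is large.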

bounds: Sequence or Bounds, optional. Bounds on variables for L-BFGS-B, TNC, SLSQP, Powell, and trust-constr methods. There are two ways to specify the bounds:

- Instance of Bounds class.

- Sequence of (min, max) pairs for each element in x. None is used to specify no bound.
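Both ways of specifying bounds can be sketched on a small problem. The objective below is a hypothetical quadratic whose unconstrained minimum lies outside the box, so the solution is expected on the boundary.

```python
import numpy as np
from scipy.optimize import minimize, Bounds


def objective(x):
    # Hypothetical objective with unconstrained minimum at (2, -1).
    return (x[0] - 2.0) ** 2 + (x[1] + 1.0) ** 2


x0 = np.array([0.5, 0.5])

# Option 1: a Bounds instance (0 <= x[0] <= 1, x[1] >= 0).
res_a = minimize(objective, x0, method='L-BFGS-B',
                 bounds=Bounds([0.0, 0.0], [1.0, np.inf]))

# Option 2: a sequence of (min, max) pairs; None means no bound on that side.
res_b = minimize(objective, x0, method='L-BFGS-B',
                 bounds=[(0.0, 1.0), (0.0, None)])

print(res_a.x, res_b.x)
```

Both calls describe the same box, so they should agree on a solution near (1, 0).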

constraints: Constraints definition (only for COBYLA, SLSQP and trust-constr). Constraints for ‘trust-constr’ are defined as a single object or a list of objects specifying constraints to the optimization problem. Available constraints are:

- LinearConstraint

- NonlinearConstraint

Constraints for COBYLA, SLSQP are defined as a list of dictionaries. Each dictionary has the fields:

- type: Constraint type: ‘eq’ for equality, ‘ineq’ for inequality.

- fun: The function defining the constraint.

- jac: The Jacobian of fun (only for SLSQP).

- args: Extra arguments to be passed to the function and Jacobian.

An equality constraint means that the constraint function result is to be zero, whereas an inequality constraint means that it is to be non-negative. Note that COBYLA only supports inequality constraints.
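A minimal sketch of the dictionary form for SLSQP follows. The objective and both constraints are hypothetical and chosen so the answer can be checked by hand: on the line x[0] + x[1] = 1 with x[0] >= 0.6, the point closest to the origin is (0.6, 0.4).

```python
import numpy as np
from scipy.optimize import minimize


def objective(x):
    # Hypothetical objective: squared distance from the origin.
    return x[0] ** 2 + x[1] ** 2


constraints = [
    # Equality: x[0] + x[1] - total == 0, with total supplied through 'args'.
    {'type': 'eq',
     'fun': lambda x, total: x[0] + x[1] - total,
     'jac': lambda x, total: np.array([1.0, 1.0]),
     'args': (1.0,)},
    # Inequality: x[0] - 0.6 >= 0 (result must be non-negative).
    {'type': 'ineq',
     'fun': lambda x: x[0] - 0.6},
]

res = minimize(objective, [0.0, 0.0], method='SLSQP', constraints=constraints)
print(res.x)
```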

tol: Tolerance for termination. For detailed control, use solver-specific options.

options: A dictionary of solver options. All methods accept the following generic options:

- maxiter: Maximum number of iterations to perform. Depending on the method each iteration may use several function evaluations.

- disp: Set to True to print convergence messages.

For method-specific options, see show_options.

callback: Called after each iteration. For ‘trust-constr’ it is a callable with the signature:

callback(xk, OptimizeResult state) -> bool

where xk is the current parameter vector and state is an OptimizeResult object, with the same fields as the ones from the return. If callback returns True the algorithm execution is terminated. For all the other methods, the signature is:

callback(xk)

where xk is the current parameter vector.

res: The optimization result (the return value), represented as an OptimizeResult object. Important attributes are: x the solution array, success a Boolean flag indicating if the optimizer exited successfully and message which describes the cause of the termination. See OptimizeResult for a description of other attributes.

See also

- minimize_scalar: Interface to minimization algorithms for scalar univariate functions

- show_options: Additional options accepted by the solvers
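The generic options, the callback(xk) form and the main OptimizeResult attributes can be sketched together. The names history and record are hypothetical helpers introduced for this example.

```python
import numpy as np
from scipy.optimize import minimize, rosen

history = []  # hypothetical container for the iterates


def record(xk):
    """Callback in the callback(xk) form: store a copy of each iterate."""
    history.append(np.copy(xk))


res = minimize(rosen, [1.3, 0.7, 0.8, 1.9, 1.2], method='BFGS',
               callback=record,
               options={'maxiter': 200, 'disp': False})

# Commonly inspected attributes of the returned OptimizeResult.
print(res.success, res.message)
print(res.x)
print(len(history), 'iterates recorded')
```

Because no gradient is supplied here, BFGS falls back to finite differences, so it is worth checking res.message rather than assuming success.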

Notes

This section describes the available solvers that can be selected by the ‘method’ parameter. The default method is BFGS when the problem has neither bounds nor constraints.

Unconstrained minimization

Method Nelder-Mead uses the Simplex algorithm [1], [2]. This algorithm is robust in many applications. However, if numerical computation of derivatives can be trusted, other algorithms using first and/or second derivative information might be preferred for their better performance in general.

Method CG uses a nonlinear conjugate gradient algorithm by Polak and Ribiere, a variant of the Fletcher-Reeves method described in [5] pp. 120-122. Only the first derivatives are used.

Method BFGS uses the quasi-Newton method of Broyden, Fletcher, Goldfarb, and Shanno (BFGS) [5] pp. 136. It uses the first derivatives only. BFGS has proven good performance even for non-smooth optimizations. This method also returns an approximation of the Hessian inverse, stored as hess_inv in the OptimizeResult object.

Method Newton-CG uses a Newton-CG algorithm [5] pp. 168 (also known as the truncated Newton method). It uses a CG method to compute the search direction. See also the TNC method for a box-constrained minimization with a similar algorithm. Suitable for large-scale problems.

Method dogleg uses the dog-leg trust-region algorithm [5] for unconstrained minimization. This algorithm requires the gradient and Hessian; furthermore the Hessian is required to be positive definite.

Method trust-ncg uses the Newton conjugate gradient trust-region algorithm [5] for unconstrained minimization. This algorithm requires the gradient and either the Hessian or a function that computes the product of the Hessian with a given vector. Suitable for large-scale problems.

Method trust-krylov uses the Newton GLTR trust-region algorithm [14], [15] for unconstrained minimization. This algorithm requires the gradient and either the Hessian or a function that computes the product of the Hessian with a given vector. Suitable for large-scale problems. On indefinite problems it usually requires fewer iterations than the trust-ncg method and is recommended for medium and large-scale problems.

Method trust-exact is a trust-region method for unconstrained minimization in which quadratic subproblems are solved almost exactly [13]. This algorithm requires the gradient and the Hessian (which is not required to be positive definite). It is, in many situations, the Newton method that converges in the fewest iterations, and it is the most recommended for small and medium-size problems.

Bound-Constrained minimization

Method L-BFGS-B uses the L-BFGS-B algorithm [6], [7] for bound constrained minimization.

Method Powell is a modification of Powell’s method [3], [4] which is a conjugate direction method. It performs sequential one-dimensional minimizations along each vector of the directions set (direc field in options and info), which is updated at each iteration of the main minimization loop. The function need not be differentiable, and no derivatives are taken. If bounds are not provided, then an unbounded line search will be used. If bounds are provided and the initial guess is within the bounds, then every function evaluation throughout the minimization procedure will be within the bounds. If bounds are provided, the initial guess is outside the bounds, and direc is full rank (default has full rank), then some function evaluations during the first iteration may be outside the bounds, but every function evaluation after the first iteration will be within the bounds. If direc is not full rank, then some parameters may not be optimized and the solution is not guaranteed to be within the bounds.

Method TNC uses a truncated Newton algorithm [5], [8] to minimize a function with variables subject to bounds. This algorithm uses gradient information; it is also called Newton Conjugate-Gradient. It differs from the Newton-CG method described above as it wraps a C implementation and allows each variable to be given upper and lower bounds.
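The bound-handling behaviour described for Powell can be probed with a small experiment. This is only a sketch: the objective, the box and the evaluated list are hypothetical, and a tiny tolerance eps is allowed for floating-point rounding when checking that evaluations stay inside the bounds.

```python
import numpy as np
from scipy.optimize import minimize

lower = np.array([0.0, 0.0])
upper = np.array([1.0, 3.0])
evaluated = []  # every point at which the objective is evaluated


def objective(x):
    evaluated.append(np.array(x, dtype=float))
    # Hypothetical objective with unconstrained minimum at (2, -1),
    # which lies outside the box.
    return (x[0] - 2.0) ** 2 + (x[1] + 1.0) ** 2


x0 = np.array([0.5, 0.5])  # initial guess inside the bounds
res = minimize(objective, x0, method='Powell',
               bounds=list(zip(lower, upper)))

points = np.array(evaluated)
eps = 1e-9  # allowance for floating-point rounding
stayed_inside = bool(np.all(points >= lower - eps)
                     and np.all(points <= upper + eps))
print(res.x, stayed_inside)
```

With the initial guess inside the box, the recorded evaluations are expected to stay inside it, and the result should sit near the corner (1, 0).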

Constrained Minimization

Method COBYLA uses the Constrained Optimization BY Linear Approximation (COBYLA) method [9], [10], [11]. The algorithm is based on linear approximations to the objective function and each constraint. The method wraps a FORTRAN implementation of the algorithm. The constraint functions ‘fun’ may return either a single number or an array or list of numbers.

Method SLSQP uses Sequential Least SQuares Programming to minimize a function of several variables with any combination of bounds, equality and inequality constraints. The method wraps the SLSQP Optimization subroutine originally implemented by Dieter Kraft [12]. Note that the wrapper handles infinite values in bounds by converting them into large floating values.

Method trust-constr is a trust-region algorithm for constrained optimization. It switches between two implementations depending on the problem definition. It is the most versatile constrained minimization algorithm implemented in SciPy and the most appropriate for large-scale problems. For equality constrained problems it is an implementation of the Byrd-Omojokun Trust-Region SQP method described in [17] and in [5], p. 549. When inequality constraints are imposed as well, it switches to the trust-region interior point method described in [16]. This interior point algorithm, in turn, solves inequality constraints by introducing slack variables and solving a sequence of equality-constrained barrier problems for progressively smaller values of the barrier parameter. The previously described equality constrained SQP method is used to solve the subproblems with increasing levels of accuracy as the iterate gets closer to a solution.
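The two situations described for trust-constr can be sketched with LinearConstraint and NonlinearConstraint objects. The objective, gradient and constraints below are hypothetical and chosen so the solutions can be worked out by hand: (0.5, 0.5) on the line x[0] + x[1] = 1, and (1, 1) on the disk x[0]**2 + x[1]**2 <= 2. A BFGS quasi-Newton approximation is passed explicitly for the Hessian.

```python
import numpy as np
from scipy.optimize import (minimize, BFGS,
                            LinearConstraint, NonlinearConstraint)


def objective(x):
    # Hypothetical objective: squared distance from (2, 2).
    return (x[0] - 2.0) ** 2 + (x[1] - 2.0) ** 2


def gradient(x):
    return np.array([2.0 * (x[0] - 2.0), 2.0 * (x[1] - 2.0)])


x0 = np.array([0.5, 0.0])

# Equality-constrained problem: x[0] + x[1] = 1 (equal lower and upper limits).
on_line = LinearConstraint([[1.0, 1.0]], [1.0], [1.0])
res_eq = minimize(objective, x0, method='trust-constr',
                  jac=gradient, hess=BFGS(), constraints=[on_line])

# Inequality-constrained problem: x[0]**2 + x[1]**2 <= 2.
in_disk = NonlinearConstraint(lambda x: x[0] ** 2 + x[1] ** 2, -np.inf, 2.0)
res_ineq = minimize(objective, x0, method='trust-constr',
                    jac=gradient, hess=BFGS(), constraints=[in_disk])

print(res_eq.x, res_ineq.x)
```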

Finite-Difference Options

For Method trust-constr the gradient and the Hessian may be approximated using three finite-difference schemes: {‘2-point’, ‘3-point’, ‘cs’}. The scheme ‘cs’ is, potentially, the most accurate but it requires the function to correctly handle complex inputs and to be differentiable in the complex plane. The scheme ‘3-point’ is more accurate than ‘2-point’ but requires twice as many operations.
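A sketch of the two valid combinations, using the Rosenbrock helpers from scipy.optimize: either an exact gradient with a finite-difference Hessian, or a finite-difference gradient with a quasi-Newton Hessian (BFGS here; SR1 would be the other strategy). The loose comparison at the end is an assumption about typical accuracy, not a guarantee.

```python
import numpy as np
from scipy.optimize import minimize, rosen, rosen_der, BFGS

x0 = np.array([1.3, 0.7, 0.8, 1.9, 1.2])

# Exact gradient, Hessian estimated by 2-point finite differences.
res_fd_hess = minimize(rosen, x0, method='trust-constr',
                       jac=rosen_der, hess='2-point')

# Gradient estimated by 3-point finite differences; the Hessian must then
# come from a quasi-Newton strategy rather than a finite-difference keyword.
res_fd_grad = minimize(rosen, x0, method='trust-constr',
                       jac='3-point', hess=BFGS())

print(res_fd_hess.x)
print(res_fd_grad.x)
```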

Custom minimizers

It may be useful to pass a custom minimization method, for example when using a frontend to this method such as scipy.optimize.basinhopping or a different library. You can simply pass a callable as the method parameter.

The callable is called as

method(fun, x0, args, **kwargs, **options)

where kwargs corresponds to any other parameters passed to minimize (such as callback, hess, etc.), except the options dict, which has its contents also passed as method parameters pair by pair. Also, if jac has been passed as a bool type, jac and fun are mangled so that fun returns just the function values and jac is converted to a function returning the Jacobian. The method shall return an OptimizeResult object.

The provided method callable must be able to accept (and possibly ignore) arbitrary parameters; the set of parameters accepted by minimize may expand in future versions and then these parameters will be passed to the method. You can find an example in the scipy.optimize tutorial.

References

[1] Nelder, J A, and R Mead. 1965. A Simplex Method for Function Minimization. The Computer Journal 7: 308-13.

[2] Wright M H. 1996. Direct search methods: Once scorned, now respectable, in Numerical Analysis 1995: Proceedings of the 1995 Dundee Biennial Conference in Numerical Analysis (Eds. D F Griffiths and G A Watson). Addison Wesley Longman, Harlow, UK. 191-208.

[3] Powell, M J D. 1964. An efficient method for finding the minimum of a function of several variables without calculating derivatives. The Computer Journal 7: 155-162.

[4] Press W, S A Teukolsky, W T Vetterling and B P Flannery. Numerical Recipes (any edition), Cambridge University Press.

[5] Nocedal, J, and S J Wright. 2006. Numerical Optimization. Springer New York.

[6] Byrd, R H and P Lu and J. Nocedal. 1995. A Limited Memory Algorithm for Bound Constrained Optimization. SIAM Journal on Scientific and Statistical Computing 16 (5): 1190-1208.

[7] Zhu, C and R H Byrd and J Nocedal. 1997. L-BFGS-B: Algorithm 778: L-BFGS-B, FORTRAN routines for large scale bound constrained optimization. ACM Transactions on Mathematical Software 23 (4): 550-560.

[8] Nash, S G. Newton-Type Minimization Via the Lanczos Method. 1984. SIAM Journal of Numerical Analysis 21: 770-778.

[9] Powell, M J D. A direct search optimization method that models the objective and constraint functions by linear interpolation. 1994. Advances in Optimization and Numerical Analysis, eds. S. Gomez and J-P Hennart, Kluwer Academic (Dordrecht), 51-67.

[10] Powell M J D. Direct search algorithms for optimization calculations. 1998. Acta Numerica 7: 287-336.

[11] Powell M J D. A view of algorithms for optimization without derivatives. 2007. Cambridge University Technical Report DAMTP 2007/NA03

[12] Kraft, D. A software package for sequential quadratic programming. 1988. Tech. Rep. DFVLR-FB 88-28, DLR German Aerospace Center – Institute for Flight Mechanics, Koln, Germany.

[13] Conn, A. R., Gould, N. I., and Toint, P. L. Trust region methods. 2000. SIAM. pp. 169-200.

[14] F. Lenders, C. Kirches, A. Potschka: “trlib: A vector-free implementation of the GLTR method for iterative solution of the trust region problem”, https://arxiv.org/abs/1611.04718

[15] N. Gould, S. Lucidi, M. Roma, P. Toint: “Solving the Trust-Region Subproblem using the Lanczos Method”, SIAM J. Optim., 9(2), 504–525, (1999).

[16] Byrd, Richard H., Mary E. Hribar, and Jorge Nocedal. 1999. An interior point algorithm for large-scale nonlinear programming. SIAM Journal on Optimization 9.4: 877-900.

[17] Lalee, Marucha, Jorge Nocedal, and Todd Plantega. 1998. On the implementation of an algorithm for large-scale equality constrained optimization. SIAM Journal on Optimization 8.3: 682-706.

Examples

Let us consider the problem of minimizing the Rosenbrock function. This function (and its respective derivatives) is implemented in rosen (resp. rosen_der, rosen_hess) in scipy.optimize. A simple first application is to minimize it with the Nelder-Mead method.
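A minimal sketch of such a Nelder-Mead call is shown below. The starting point and the tolerance are assumptions made for illustration; any reasonable initial guess should behave similarly.

```python
import numpy as np
from scipy.optimize import minimize, rosen

x0 = [1.3, 0.7, 0.8, 1.9, 1.2]   # assumed starting point

# Derivative-free simplex search; tol sets the termination tolerance.
res = minimize(rosen, x0, method='Nelder-Mead', tol=1e-6)
print(res.x)
```

The Rosenbrock function has its minimum where every component equals 1, so res.x should be close to a vector of ones.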

The same problem can be solved with the BFGS algorithm, using the first derivative and a few options.
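A sketch of the BFGS variant follows, assuming the same starting point as before and a gradient tolerance passed through options (gtol is a BFGS-specific option; the value is illustrative).

```python
import numpy as np
from scipy.optimize import minimize, rosen, rosen_der

x0 = [1.3, 0.7, 0.8, 1.9, 1.2]   # assumed starting point

# Analytic gradient via jac; disp=True prints a convergence summary.
res = minimize(rosen, x0, method='BFGS', jac=rosen_der,
               options={'gtol': 1e-6, 'disp': True})
print(res.x)
print(res.hess_inv.shape)   # approximation of the inverse Hessian, (n, n)
```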

Next, consider a minimization problem with several constraints (namely Example 16.4 from [5]). The objective function is:

f(x) = (x[0] - 1)^2 + (x[1] - 2.5)^2

There are three constraints defined as:

- x[0] - 2 x[1] + 2 >= 0

- -x[0] - 2 x[1] + 6 >= 0

- -x[0] + 2 x[1] + 2 >= 0

And variables must be positive, hence the following bounds:

x[0] >= 0, x[1] >= 0

The optimization problem is solved using the SLSQP method, starting from the initial guess (2, 0).
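The whole problem can be sketched as follows, assuming the standard statement of Example 16.4: objective (x[0] - 1)^2 + (x[1] - 2.5)^2, three linear inequality constraints, non-negative variables, and the initial guess (2, 0). The names fun, cons and bnds are illustrative.

```python
from scipy.optimize import minimize

# Objective function.
fun = lambda x: (x[0] - 1) ** 2 + (x[1] - 2.5) ** 2

# Three inequality constraints, each required to be non-negative.
cons = ({'type': 'ineq', 'fun': lambda x: x[0] - 2 * x[1] + 2},
        {'type': 'ineq', 'fun': lambda x: -x[0] - 2 * x[1] + 6},
        {'type': 'ineq', 'fun': lambda x: -x[0] + 2 * x[1] + 2})

# Both variables are bounded below by 0 and unbounded above.
bnds = ((0, None), (0, None))

res = minimize(fun, (2, 0), method='SLSQP', bounds=bnds, constraints=cons)
print(res.x)
```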

It should converge to the theoretical solution (1.4, 1.7).
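Finally, the custom minimizers described in the Notes can be illustrated with a toy example. The function compass_search below is hypothetical: a fixed-step search along each coordinate, written only to show the calling convention. It accepts and ignores any extra keyword arguments that minimize forwards (jac, hess, bounds and so on), and it receives the contents of options as keyword arguments.

```python
import numpy as np
from scipy.optimize import minimize, OptimizeResult


def compass_search(fun, x0, args=(), stepsize=0.5, maxiter=100,
                   callback=None, **unused):
    """Hypothetical custom method: fixed-step search along each coordinate."""
    best_x = np.array(x0, dtype=float)
    best_f = fun(best_x, *args)
    nfev, nit, improved = 1, 0, True
    while improved and nit < maxiter:
        improved = False
        nit += 1
        for dim in range(best_x.size):
            for step in (-stepsize, stepsize):
                trial = best_x.copy()
                trial[dim] += step
                f_trial = fun(trial, *args)
                nfev += 1
                if f_trial < best_f:
                    best_x, best_f, improved = trial, f_trial, True
        if callback is not None:
            callback(best_x)
    # success means the loop stopped because no step improved the objective.
    return OptimizeResult(x=best_x, fun=best_f, nit=nit, nfev=nfev,
                          success=not improved)


def objective(x):
    # Hypothetical objective with minimum at (1, -2).
    return (x[0] - 1.0) ** 2 + (x[1] + 2.0) ** 2


res = minimize(objective, [0.0, 0.0], method=compass_search,
               options={'stepsize': 0.5})
print(res.x, res.nit)
```

Because the step is fixed, this sketch only reaches points on a grid around the starting guess; a practical method would shrink the step once no direction improves.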